Technology

How To Create The Perfect Digital Human

The Rise of Digital Humans: In recent years, digital humans have become increasingly popular across industries, from entertainment to customer service. Digital human platforms have advanced rapidly and now offer increasingly lifelike capabilities and rich functionality. So it’s easier to create a digital human with current technologies, but like any conversational AI…

OpenAI Launches ChatGPT API

OpenAI has finally launched the ChatGPT API. For some time, companies have claimed to use ChatGPT in their technology stack, something we’ve highlighted in the past. In reality, they were using GPT-3.5, also known as the text-davinci-003 model. While this model was an improvement on GPT-3, it lacked the fine-tuned conversational capabilities of ChatGPT that…

Must-Read Books To Learn Conversation Design

Introduction: Conversation design is a critical aspect of creating successful digital humans, chatbots, and voice assistants. The field involves designing the flow and structure of interactions between users and these digital entities. Designing a truly engaging conversation that works requires skill. There is a lot to learn as it’s a complex and…

Build a Custom AI-Powered GPT-3 Chatbot For Your Business

The Rise Of ChatGPT: ChatGPT has rapidly gained popularity worldwide, with millions of users relying on its vast knowledge base and its ability to hold multi-turn conversations of considerable complexity. However, despite its usefulness for general information, ChatGPT is limited to publicly available internet data up to 2021, and it has no access to your private data…

What’s The Difference Between ChatGPT & GPT-3?

ChatGPT Confusion: At the time of writing, numerous solutions claiming to be ChatGPT-branded have hit the market since ChatGPT launched at the end of November 2022. However, I’m here to clarify that these solutions are not ChatGPT, but rather GPT-3 solutions. There seems to be a lot of confusion between ChatGPT and GPT-3.…

What is ChatGPT?

AI-Mazing: ChatGPT is the latest technology release from the team at OpenAI, and it’s taken the internet by storm. Reactions have ranged from amazement to scepticism, with everything in between. It’s been hugely popular, with more than a million people using it in the first five days. ChatGPT is OpenAI’s latest large language model, released on…

How Much Does it Cost To Build a Chatbot in 2023?

3 Books That Will Boost Your Chatbot Knowledge

Introduction: Chatbots are currently dominating online markets, especially in countries such as the U.S., India, Germany, Brazil, and the UK. According to a Business Insider article on chatbot statistics, 40% of internet users worldwide prefer chatbots over virtual agents because the chatbots’ 24-hour service lets them get answers quickly and conveniently. Due to the…

Buckinghamshire Business Festival Sponsor 2021

We are proud to be a Buckinghamshire Business Festival sponsor this year. We are sponsoring the 2021 Buckinghamshire Business Festival, running from April 19th – 30th. The festival has been organised by Buckinghamshire Business First, with a packed schedule of events and opportunities to make new connections across the two weeks. Look…

A Guide to Conversational IVR

So what is conversational IVR (Interactive Voice Response), and why should businesses care? According to Forrester Research, customers expect easy and effective customer service that builds positive emotional connections every time they interact with a brand or organization. For businesses, improving their organization’s customer experience is a high priority. Additionally, 40% of surveyed business leaders…

YouTube Adds Voice Search & Commands

YouTube uses voice search technology to augment its website user interface. We covered this concept briefly in our What Can We Expect From Conversational AI In 2021 post. One of our predictions for 2021 was a rise in the popularity of adding voice capabilities to software products, specifically leveraging this sort of technology in touch…

What Can We Expect From Conversational AI in 2021?

The use of conversational AI will continue to rise. Yes, we are going to see continued growth in conversational AI in 2021. It’s predicted that 1.4 billion people will use chatbots on a regular basis, with $5 billion projected to be invested in chatbots by 2021. Voice assistant use will also grow. The use of…

Dialogflow CX Now Has a Free Trial

It was pretty exciting when Dialogflow CX was announced back in September 2020, and we talked about it briefly in an earlier post. However, Dialogflow CX can be pricey because its pricing is based on the edition and the number of requests made during the month (a request being a call to the Dialogflow service, either via an API call or by using the…

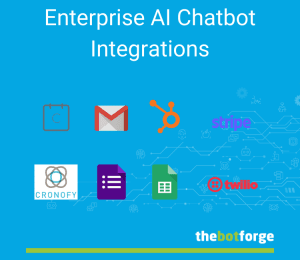

Enterprise AI Chatbot Integrations

The chatbots we create at The Bot Forge can do anything. We talk a lot about the chatbots themselves: NLP, entities, sentiment analysis, machine learning, training, and all the other good stuff we leverage to create the optimum chat experience for our clients. However, sometimes we don’t cover what goes on under the hood to ensure…

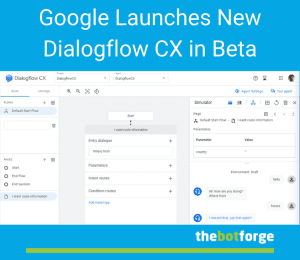

Google Launches New Dialogflow CX in Beta

Google has launched a beta of a new version of its Dialogflow natural language understanding (NLU) platform: Dialogflow CX. The Dialogflow version we all know and love is now called Dialogflow ES. The new Dialogflow Customer Experience (CX) platform is aimed at building advanced artificial intelligence agents for enterprise-level projects at a larger and more complex scale…